Now entering the field site (architecture at the Chandler-Gilbert Community College in Chandler, Arizona)

This past fall, I had the opportunity to teach two classes of Introduction to Solar System Astronomy at Chandler-Gilbert Community College, part of the Maricopa Community College system in the Phoenix metropolitan area. As it had been almost ten years since I last taught in a physical classroom, I wasn’t sure how much of my digital toolkit would translate back into that setting. What did and did not work during the semester gave me some interesting insights into the optimal use of digital technologies in a lab course.

When I first started the semester, I fell back on what I had used successfully before starting down the digital science education pathway … paper labs. My paper labs follow a logical sequence, starting with assumptions and preconceptions, moving on to observations, and finishing with analysis. This is slightly different from typical labs, which treat quality observations as the starting point of science. We would like to think they are, but they aren’t … observations are driven in large part by our interests and preconceptions about how the universe around us works. My labs bring these out into the open so that I can better understand where my students are starting from and, eventually, gain greater insight into why they make the mistakes they make later in the lab. After explicitly outlining their preconceptions and assumptions, students proceed to observations, do some basic quality control checks on those observations, and then use those observations in higher-level work. That higher-level work includes building models, using them to make predictions about future observations, and then using those new observations to modify their models. The end goal is to build up to a very basic version of the scientific consensus on the topic we are studying, rather than just telling students what it is during a lecture.

In reality, of course, this didn’t quite work out the way I had hoped. The general approach of most students was to slam through the questions as quickly as possible. I found students rapidly diverging on unexpected tangents, making critical errors in setting up and recording observations, blowing off the validation step (or more often assuming that they had done everything correctly and didn’t need to validate), and writing down nonsensical interpretations. Each of my classes had 24 students, divided into 6 groups of four that I needed to keep tabs on. As I reviewed students’ work, I would catch a lot of these mistakes as they were happening and then work with that group to rectify these errors to get them back on track. But as I did that, another group would get off-track and by the time I got to them, we had to backtrack significantly to correct earlier errors. Providing meaningful real-time feedback to six groups as they made (often) the same errors was difficult. By the time I got around to some groups, they had finished up the questions, turned in the labs, and departed. That left me with a mess to evaluate and grade. Ideally, I would have gone through each lab and provided individual commentary on each question for each student. But there wasn’t enough time and it quickly became tiresome to hand-write the same comment 48 times. The best I could muster was a review during the next class period on what the general mistakes were, what the correct solutions were, and how to derive them. Students failed to see that this was a learning opportunity and mostly zoned out. With written commentary coming weeks after the learning moment had passed, what was the point? All the important learning moments were getting missed.

This is the key problem in many lab courses. Lab settings provide a significant number of learning opportunities, but we are not taking advantage of them. Often we can’t because of the limitations of our tools. Standard laboratory exercises, often done with paper and pencil, don’t have a mechanism for flagging incorrect or incomplete understanding in real time, and students often have too little experience to realize that they’re completing tasks incorrectly. An attentive instructor can catch these errors as they happen and help students think their way through to the correct solutions, but there is a limit to how many students even the most attentive instructor can assist in a given lab period. After-the-fact grades and feedback are almost completely useless except for the most diligent students, because most students barely remember what they did a few classes ago, never mind the chain of logic they used to complete the work.

This is where digital systems shine and help address the main problem of exploratory activities like laboratory exercises … they can provide meaningful feedback to students, based on what they are actually doing, at the moment they need it most. About a month into my courses, I was able to switch two of my lab courses to digital activities built in an intelligent tutoring system (ITS). This accomplished one of the primary goals that I had for the lab activities, which was to provide students with instant feedback on what they were doing, even when I couldn’t personally provide it. An ITS excels at this by essentially multiplying me for all my students, as if I were working right beside them during the entire activity.

More importantly, I wanted students to be stopped dead in their tracks if they didn’t know what they were doing. This is a critical component of my preferred learning design as it forces students to stop, discuss, and ask questions. Paper labs can’t do that; students run off into un-reality because their lack of understanding can’t be caught as they move from question to question. But digital systems allow for this kind of learning structure. They even allow for an “infinite loop” structure, where a student loops through the same activity as long as the misunderstanding persists. In a particularly interesting moment in one of my classes, I watched as a group that was especially prone to shallow thinking became trapped in one of my infinite loops, running through it three times before the learning actually happened. Upon realizing what was happening and that neither Google nor I were going to help them, they discussed what they had already tried and what patterns they had observed, divided up the labor to try a few more permutations, and then logically reasoned their way to the correct solution.
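The “infinite loop” structure can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular ITS implements it; the function name `run_until_mastered` and the `is_correct` check are hypothetical.

```python
from typing import Callable, Iterable


def run_until_mastered(
    answers: Iterable[str],
    is_correct: Callable[[str], bool],
) -> int:
    """Return how many attempts it took to reach a correct answer.

    Hypothetical sketch: there is deliberately no attempt cap and no
    bypass. The loop ends only when a correct answer is submitted.
    """
    attempts = 0
    for answer in answers:
        attempts += 1
        if is_correct(answer):
            return attempts
    # The student stopped submitting answers without ever being correct.
    raise RuntimeError("activity left before reaching mastery")
```

For example, `run_until_mastered(["a", "b", "c"], lambda ans: ans == "c")` returns `3`: the first two submissions send the student back through the activity, and only the third lets them continue.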

This is a digital learning design that doesn’t seem to be especially popular. Instead, I’ve observed that many learning designs prioritize student comfort over learning. In the most frequent adaptations of my designs, I find people following the “three strikes and you get a free pass” structure combined with a point penalty for using the bypass. This is a system that allows students to avoid material they don’t understand and sends them into more complex material that they are then unprepared for. But student frustration (within reason) is a critical part of the learning process and I find that by triggering it with learning designs where students can’t simply exhaust the patience of the system, students engage more with each other and with me to figure out how to continue, leading to deeper understanding.
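For contrast, here is a minimal sketch of the “three strikes” bypass described above. The function name, the default of three strikes, and the 50% penalty are all assumptions chosen for illustration; real adaptations will differ.

```python
from typing import List, Tuple


def three_strikes_outcome(
    results: List[bool],
    max_strikes: int = 3,    # assumed strike limit
    penalty: float = 0.5,    # assumed point penalty for taking the bypass
) -> Tuple[bool, float]:
    """Return (mastered, credit) for a sequence of attempt results.

    Hypothetical sketch: after `max_strikes` wrong answers the student
    is passed along with reduced credit, whether or not they understand.
    """
    strikes = 0
    for correct in results:
        if correct:
            return True, 1.0
        strikes += 1
        if strikes >= max_strikes:
            # Bypass: the student moves on without having mastered the step.
            return False, 1.0 - penalty
    # The student stopped before either succeeding or striking out.
    return False, 0.0
```

The important line is the bypass branch: a student who guesses wrong three times still advances to the more complex material, just with fewer points.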

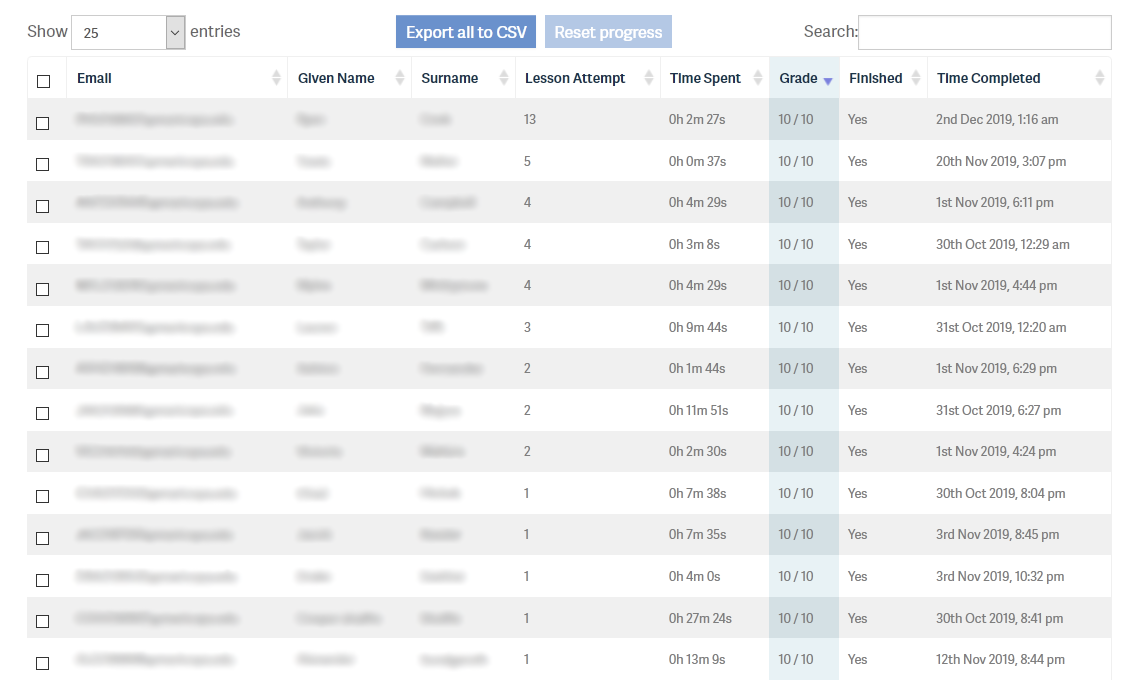

In the end, the switch to digital labs was successful. Many students who generally knew what they were doing received helpful assistance when they needed it, while the students who really didn’t understand what was happening engaged with me and their peers to try to figure out what they needed to do. As an extra bonus, students could rerun the same ITS activities dozens of times to perfect their score and mastery without giving me additional grading work. Overall, students reported being more engaged, despite often being aggravated with a particularly difficult topic. But students can be prepared for this as well, if they are told in advance that frustration is normal during learning. It simply means that how they think the world works is colliding with how the world actually works, and that this discrepancy needs to be resolved through discussion and questions. As a result, in my classes the frustration never boiled over and for many students, triumph over a particularly challenging concept became a memorable moment that they recounted weeks later.

Sample analytics from the CGC Intro to Astronomy class, Fall 2019. Some students are able to immediately solve the required problem sets. Others take more attempts and more time, but eventually get there as well.

Notes for Practice

Digitizing Labs

Intelligent tutoring systems (ITS) can provide instant feedback on what students are doing. This is preferable to providing written feedback days or weeks later in a pen-and-paper setting (which is also extremely repetitive and time-consuming for instructors).

Create moments of student frustration to force engagement with peers and instructor. Avoid free passes and bypasses through difficult content, as this leaves students less prepared to tackle more complex content.

Utilize the tension between student preconceptions and reality on a topic to drive frustration. Avoid designing difficult activities simply to frustrate students.

Consider infinite do-overs for full credit. There isn’t a particularly logical reason for requiring a student to master a concept on their first attempt, and an ITS allows infinite do-overs without additional grading work for instructors.
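As a rough sketch of what “infinite do-overs for full credit” means for grading, the two hypothetical functions below compare recording only the first attempt with recording the best attempt. The names and the 0.0 to 1.0 score scale are assumptions for illustration.

```python
from typing import List


def first_attempt_score(attempt_scores: List[float]) -> float:
    """Grade only the first try (the policy argued against above)."""
    return attempt_scores[0] if attempt_scores else 0.0


def best_attempt_score(attempt_scores: List[float]) -> float:
    """Grade the best try, so any number of do-overs can earn full credit."""
    return max(attempt_scores, default=0.0)
```

With attempt scores of `[0.4, 0.7, 1.0]`, the first policy records `0.4` and the second records `1.0`, with no extra grading work either way.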